How to Measure AI ROI for a Small Business

AI should not be judged by how impressive a demo feels. It should be judged by whether the business gets work done faster, makes better decisions, catches problems earlier, or creates revenue opportunities that would otherwise be missed. For a small business owner, that means AI ROI has to be practical enough to measure without hiring an analytics department.

The mistake most companies make is measuring AI like a novelty. They count prompts, outputs, or hours spent experimenting. Those numbers are easy to collect, but they do not prove the tool made the business healthier. A useful AI system needs a scorecard tied to the workflow it is supposed to improve.

Start with the job, not the model

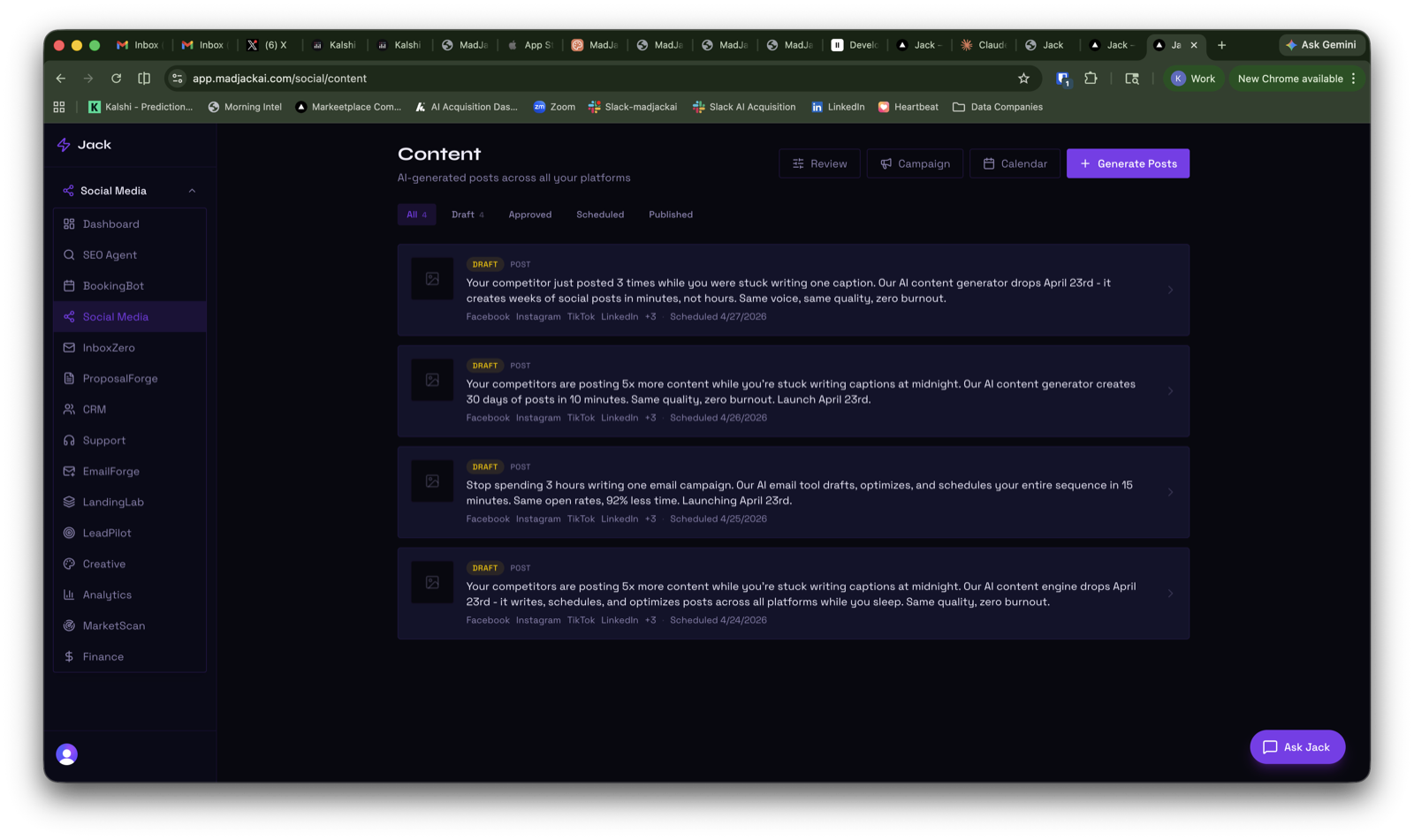

Before choosing an AI tool, write down the job it is supposed to perform. An SEO tool might crawl pages, find missing meta descriptions, draft fixes, and verify that the score improved. A social tool might generate posts, queue approvals, schedule content, pull comments, and report what was published. A support tool might answer approved questions and escalate anything uncertain.

That job description becomes the measurement plan. If the tool is supposed to draft customer replies, measure how many useful drafts were created, how many were approved, how much editing they needed, and whether response time improved. If the tool is supposed to monitor broken integrations, measure how many issues it caught before the owner found them.
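For the customer-reply example, the measurement plan can be as small as a few fields per draft. The sketch below is a minimal illustration, not a feature of any particular tool: `DraftReply`, its `edit_ratio` field (the share of a draft rewritten before sending), and the baseline response time are hypothetical, and a real helpdesk will record this data in its own way.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class DraftReply:
    approved: bool           # a person approved the draft for sending
    edit_ratio: float        # hypothetical: share of the draft rewritten before sending (0.0 to 1.0)
    response_minutes: float  # time from the customer's message to the reply; ignored if not approved


def reply_metrics(drafts: List[DraftReply], baseline_minutes: float) -> dict:
    """Summarize a week of AI-drafted replies against the pre-AI median response time."""
    sent = [d for d in drafts if d.approved]
    if not sent:
        # Nothing was approved, so there is no edit or response data to compare.
        return {"drafts": len(drafts), "approval_rate": 0.0,
                "median_edit_ratio": None, "minutes_faster": None}
    return {
        "drafts": len(drafts),
        "approval_rate": len(sent) / len(drafts),
        # Heavy editing means the drafts save less time than the draft count suggests.
        "median_edit_ratio": median(d.edit_ratio for d in sent),
        # Positive means approved replies now go out faster than the baseline.
        "minutes_faster": baseline_minutes - median(d.response_minutes for d in sent),
    }


if __name__ == "__main__":
    week = [
        DraftReply(approved=True, edit_ratio=0.1, response_minutes=30),
        DraftReply(approved=True, edit_ratio=0.3, response_minutes=50),
        DraftReply(approved=False, edit_ratio=1.0, response_minutes=45),
    ]
    print(reply_metrics(week, baseline_minutes=120))
```

Tracking approvals and editing effort alongside the draft count is what keeps "many drafts" from being mistaken for "useful drafts."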

Use four ROI buckets

Most AI ROI fits into four simple buckets: time saved, revenue created, risk reduced, and quality improved. A single workflow might touch more than one, but separating them keeps the conversation honest.

- Time saved: hours removed from repetitive work, reporting, cleanup, drafting, review prep, scheduling, or research.

- Revenue created: leads recovered, proposals sent faster, content published consistently, ad tests launched with better preparation, or follow-ups that would have been missed.

- Risk reduced: broken integrations caught, failed tasks escalated, compliance-sensitive actions held for approval, or bad automation prevented from reaching customers.

- Quality improved: clearer copy, better briefs, more complete records, cleaner inboxes, stronger SEO pages, or more consistent customer answers.

For example, an inbox assistant might save time and reduce risk. It saves time by classifying email and drafting replies. It reduces risk by keeping uncertain deletes, VIP senders, legal notes, and client issues in a review queue. Measuring both gives a better picture than simply saying the inbox was automated.

Set a baseline before changing the workflow

A baseline does not need to be perfect. It just needs to be honest. How many hours per week does the task take today? How many leads go unanswered? How often are reports late? How many posts get published in a normal month? How long does it take to respond to a support ticket? How many SEO issues sit unresolved?

Once that baseline exists, the AI tool can be judged against it. If a business normally publishes four social posts a month and the tool helps produce twelve approved posts without lowering quality, that is measurable progress. But if the owner now spends as many hours fixing the system as the task used to take, the ROI is weaker than the post count suggests.
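The arithmetic behind that comparison is simple enough to sketch. In this hypothetical example, `TaskPeriod`, `compare_to_baseline`, and the hour figures are assumptions for illustration; the point is that time spent reviewing and fixing the tool is subtracted from the savings rather than ignored.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskPeriod:
    hours: float     # staff hours spent on the task in the period (e.g. one month)
    units_done: int  # finished, approved units, e.g. social posts actually published


def compare_to_baseline(baseline: TaskPeriod, with_ai: TaskPeriod, fix_hours: float) -> dict:
    """Compare one period of an AI-assisted workflow with its pre-AI baseline.

    fix_hours is time the owner spent reviewing, correcting, or restarting
    the tool. It counts against the savings instead of being left out.
    """
    net_hours_saved = baseline.hours - (with_ai.hours + fix_hours)
    throughput: Optional[float] = (
        with_ai.units_done / baseline.units_done if baseline.units_done else None
    )
    return {"net_hours_saved": net_hours_saved, "throughput_multiple": throughput}


if __name__ == "__main__":
    # Hypothetical month: 4 posts before, 12 approved posts with AI,
    # but the owner now spends 5 hours a month fixing the tool.
    before = TaskPeriod(hours=8.0, units_done=4)
    after = TaskPeriod(hours=3.0, units_done=12)
    print(compare_to_baseline(before, after, fix_hours=5.0))
```

With those assumed numbers, approved posts triple but net hours saved come out to zero, which is the weaker-than-it-looks case described above.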

Separate drafts from completed work

AI creates a lot of output, and output is not the same as completed work. A draft blog post is not published content. A proposed ad is not a campaign. A support ticket is not resolved until it has been answered accurately or escalated correctly. A task is not done until the result has been checked.

This is why verification matters. The system coordinating the work (Jack, in MadJack AI's case) should know what was requested, which agent owned it, whether the worker finished, what evidence proves the result, and what still needs approval or a missing connection. Without that loop, the owner ends up checking dashboards and terminals again, which defeats the point.
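One way to keep drafts and completed work separate is to give every task a status and require evidence before it counts as done. This is a hypothetical sketch: the `Status` values, the `Task` fields, and the rule that a task needs recorded evidence to count are assumptions that mirror the questions above, not how any specific product tracks work.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    DRAFTED = "drafted"                    # output exists, nothing has shipped
    NEEDS_APPROVAL = "needs_approval"      # waiting on a person to sign off
    NEEDS_CONNECTION = "needs_connection"  # blocked on a missing integration or login
    DONE = "done"                          # the worker reports that it finished


@dataclass
class Task:
    request: str                    # what was requested
    agent: str                      # which agent owned it
    status: Status
    evidence: Optional[str] = None  # proof of the result, e.g. a re-run check or live URL


def summarize(tasks: List[Task]) -> dict:
    """Report only evidenced work as completed and surface everything else."""
    return {
        "completed": [t.request for t in tasks if t.status is Status.DONE and t.evidence],
        # The worker says it finished, but nothing proves it yet.
        "unverified": [t.request for t in tasks if t.status is Status.DONE and not t.evidence],
        "blocked": [t.request for t in tasks
                    if t.status in (Status.NEEDS_APPROVAL, Status.NEEDS_CONNECTION)],
        "drafts": [t.request for t in tasks if t.status is Status.DRAFTED],
    }


if __name__ == "__main__":
    tasks = [
        Task("Fix missing meta descriptions", "seo", Status.DONE, evidence="re-crawl: 0 missing"),
        Task("Sync new leads to CRM", "ops", Status.DONE),
        Task("Schedule next week's posts", "social", Status.NEEDS_APPROVAL),
        Task("Draft blog post", "content", Status.DRAFTED),
    ]
    for group, items in summarize(tasks).items():
        print(group, items)
```

A task the worker marks as done but cannot back with evidence lands in its own group, so it is reported instead of silently counted.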

Build a simple AI ROI scorecard

A useful scorecard can fit on one page. For each AI workflow, track the owner, the tool, the intended job, the baseline, the weekly result, the verification method, and the next action. This makes the system easier to manage as more tools are added.

- Workflow: what job is the AI tool responsible for?

- Baseline: what does the task cost today in time, money, delay, or missed opportunity?

- Output: what did the tool produce this week?

- Outcome: what actually changed in the business?

- Verification: how do we know the work was completed correctly?

- Next action: should Jack continue, adjust, escalate, or stop?
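The scorecard can live in a spreadsheet, but writing one row as a record makes its rules explicit. In this minimal sketch, the fields mirror the list above and the four bucket names come from the ROI buckets earlier in the article; `ScorecardRow`, `NextAction`, and the `warnings()` checks are hypothetical illustrations of how to flag activity that has no verified outcome.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Set

# The four ROI buckets described earlier in the article.
BUCKETS = {"time saved", "revenue created", "risk reduced", "quality improved"}


class NextAction(Enum):
    CONTINUE = "continue"
    ADJUST = "adjust"
    ESCALATE = "escalate"
    STOP = "stop"


@dataclass
class ScorecardRow:
    workflow: str      # what job the AI tool is responsible for
    baseline: str      # what the task costs today
    output: str        # what the tool produced this week
    outcome: str       # what actually changed in the business ("" if nothing yet)
    verification: str  # how we know the work was done correctly ("" if unchecked)
    next_action: NextAction
    buckets: Set[str] = field(default_factory=set)

    def warnings(self) -> List[str]:
        """Hypothetical checks that flag activity being counted as value."""
        problems = []
        if not self.outcome:
            problems.append("output with no business outcome yet")
        if not self.verification:
            problems.append("no verification method")
        if not self.buckets:
            problems.append("no ROI bucket assigned")
        unknown = self.buckets - BUCKETS
        if unknown:
            problems.append(f"unknown ROI bucket(s): {sorted(unknown)}")
        if problems and self.next_action is NextAction.CONTINUE:
            problems.append("marked 'continue' despite the gaps above")
        return problems


if __name__ == "__main__":
    row = ScorecardRow(
        workflow="Draft customer replies",
        baseline="Replies written by hand; slow on busy days",
        output="40 drafts",  # hypothetical figure
        outcome="",
        verification="Owner spot-checks five sent replies each week",
        next_action=NextAction.CONTINUE,
        buckets={"time saved"},
    )
    for problem in row.warnings():
        print("-", problem)
```

A row with plenty of output but an empty outcome gets flagged, which is the distinction between activity and value that the scorecard exists to enforce.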

The scorecard prevents the business from confusing activity with value. It also gives the AI system something to improve against. If the tool knows which drafts were approved, which posts performed, which emails were corrected, and which alerts mattered, it can become more useful over time.

What good ROI looks like

Good AI ROI is usually not dramatic on day one. It often starts as a tighter operating loop: fewer missed tasks, faster drafts, cleaner queues, clearer reports, and better visibility into what is blocked. Over time, those gains compound. The business owner spends less time chasing work and more time making decisions.

For MadJack AI, the standard is simple: the tool should not leave the owner wondering whether anything happened. It should do the work it is allowed to do, show what needs approval, verify what was fixed, and report what is still blocked. That is how AI moves from interesting software to a real operating advantage.

Keep exploring MadJack AI

Browse the AI tools marketplace to see the products we are building, read about custom enterprise AI operating systems, or contact MadJack AI if you want help applying these ideas to your business.